Seasonal Shifts in Plant Diversity Effects on Above-Ground–Below-Ground Phenological Synchrony

Ana E. Bonato Asato, Claudia Guimaraes-Steinicke, Gideon Stein, Berit Schreck, Teja Kattenborn, Anne Ebeling, Stefan Posch, Joachim Denzler, Tim Büchner, Maha Shadaydeh, Christian Wirth, Nico Eisenhauer, and Jes Hines

Journal of Ecology, Jan 2025

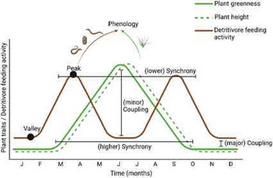

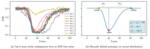

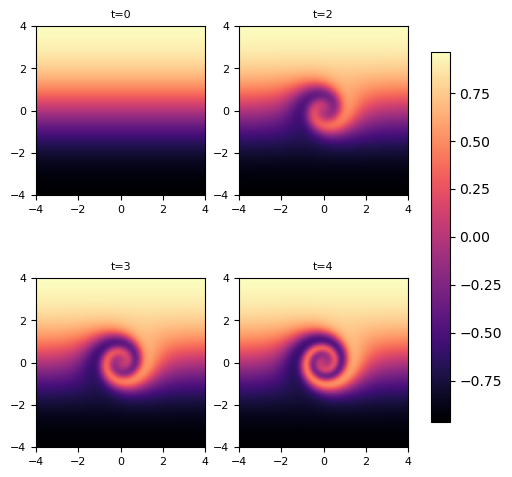

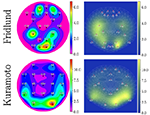

The significance of biological diversity as a mechanism that optimizes niche breadth for resource acquisition and enhances ecosystem functioning is well established. However, a significant gap remains in exploring temporal niche breadth, particularly the phenological aspects of community dynamics. Plant phenology has traditionally been studied through above-ground assessments; this study extends it into the relatively unexplored realm of below-ground processes. It thereby brings to the forefront the influence of biological diversity on the synchronization of above-ground and below-ground dynamics, providing a novel perspective on this complex relationship. Community traits (plant height and greenness) and soil processes (root growth and detritivore feeding activity) were monitored at 2-week intervals over one year in an experimental grassland spanning a plant diversity gradient from monocultures to 60-species mixtures. Our findings revealed that plant diversity increased yearly plant height, root growth and detritivore feeding activity, and enhanced the synchrony between above-ground traits and soil dynamics. Soil microclimate also shaped the phenology of these traits and processes. However, the effects of plant diversity and soil microclimate on above-ground traits and soil dynamics varied considerably in strength and direction across seasons, indicating a nuanced relationship between biodiversity, climate and ecosystem processes. Notably, during the growing season, peak plant community height preceded the onset of greenness. Root production began immediately after leaf senescence and persisted throughout winter. Detritivore activity occurred throughout the year but showed pronounced peaks in summer and late fall, with notable variability.

Synthesis. The study underscores the dynamic interplay between plant diversity, above-ground–below-ground phenological patterns and ecosystem functioning. It suggests that plant diversity modulates above-ground–below-ground interdependence through intricate phenological dynamics, with the degree of synchrony depending on which processes are compared and on the season. By providing comprehensive within-year data, the research reveals fundamental differences in phenological patterns among shoots, roots and soil fauna activity, and emphasizes the pivotal role of plant diversity in shaping ecosystem processes.
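The abstract does not state how synchrony between above-ground and below-ground series was quantified. Purely as an illustration, and not as the authors' method, one simple way to compare the timing of two series sampled on the same 2-week schedule is a lagged Pearson correlation: shift one series against the other and find the lag at which they co-vary most strongly. The function names (`pearson`, `lagged_synchrony`) and the synthetic data below are hypothetical.

```python
import math
from typing import Optional, Sequence, Tuple


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length series; NaN if either is constant."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("series must have equal length >= 2")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    denom = math.sqrt(sxx * syy)
    return sxy / denom if denom > 0 else math.nan


def lagged_synchrony(
    above: Sequence[float], below: Sequence[float], max_lag: int
) -> Tuple[int, float]:
    """Find the lag (in sampling intervals) at which `below` best tracks `above`.

    A positive lag means the below-ground series follows the above-ground one;
    a negative lag means it leads. Returns (lag, r).
    """
    if len(above) != len(below):
        raise ValueError("series must be sampled on the same dates")
    n = len(above)
    best: Optional[Tuple[int, float]] = None
    for lag in range(-max_lag, max_lag + 1):
        if n - abs(lag) < 3:
            continue  # too little overlap to correlate
        if lag >= 0:
            # pair above[t] with below[t + lag]
            a, b = above[: n - lag], below[lag:]
        else:
            # pair above[t - lag] with below[t], i.e. below-ground leads
            a, b = above[-lag:], below[: n + lag]
        r = pearson(a, b)
        if math.isnan(r):
            continue  # a constant window carries no timing information
        if best is None or r > best[1]:
            best = (lag, r)
    if best is None:
        raise ValueError("no lag had enough overlapping, non-constant data")
    return best


# Hypothetical data: 26 fortnightly samples (one year), a seasonal signal
# above ground and the same signal delayed by 3 intervals below ground.
N = 26
shoot = [math.sin(2 * math.pi * t / N) for t in range(N)]
root = [math.sin(2 * math.pi * (t - 3) / N) for t in range(N)]

lag, r = lagged_synchrony(shoot, root, max_lag=6)
print(f"below-ground lags by {lag} intervals ({2 * lag} weeks), r = {r:.3f}")
# prints: below-ground lags by 3 intervals (6 weeks), r = 1.000
```

With fortnightly sampling, lags resolve only to 2-week steps, and correlation captures co-variation in shape rather than differences in absolute level; other synchrony measures would be equally defensible.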

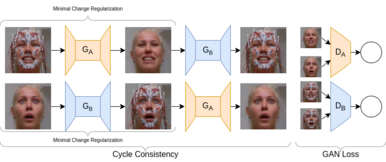

Modifying Generative Distributions in Latent Diffusion Models to Improve Alignment with Desired Properties. In International Conference on Machine Vision and Applications (MVA), 2025

Measuring and Visualizing Volumetric Changes Before and After 10-Day Biofeedback Therapy in Patients with Synkinetic Facial Palsy Using 3D Video Recordings [Abstract]. In Congress of the Confederation of European ORL-HNS, 2024

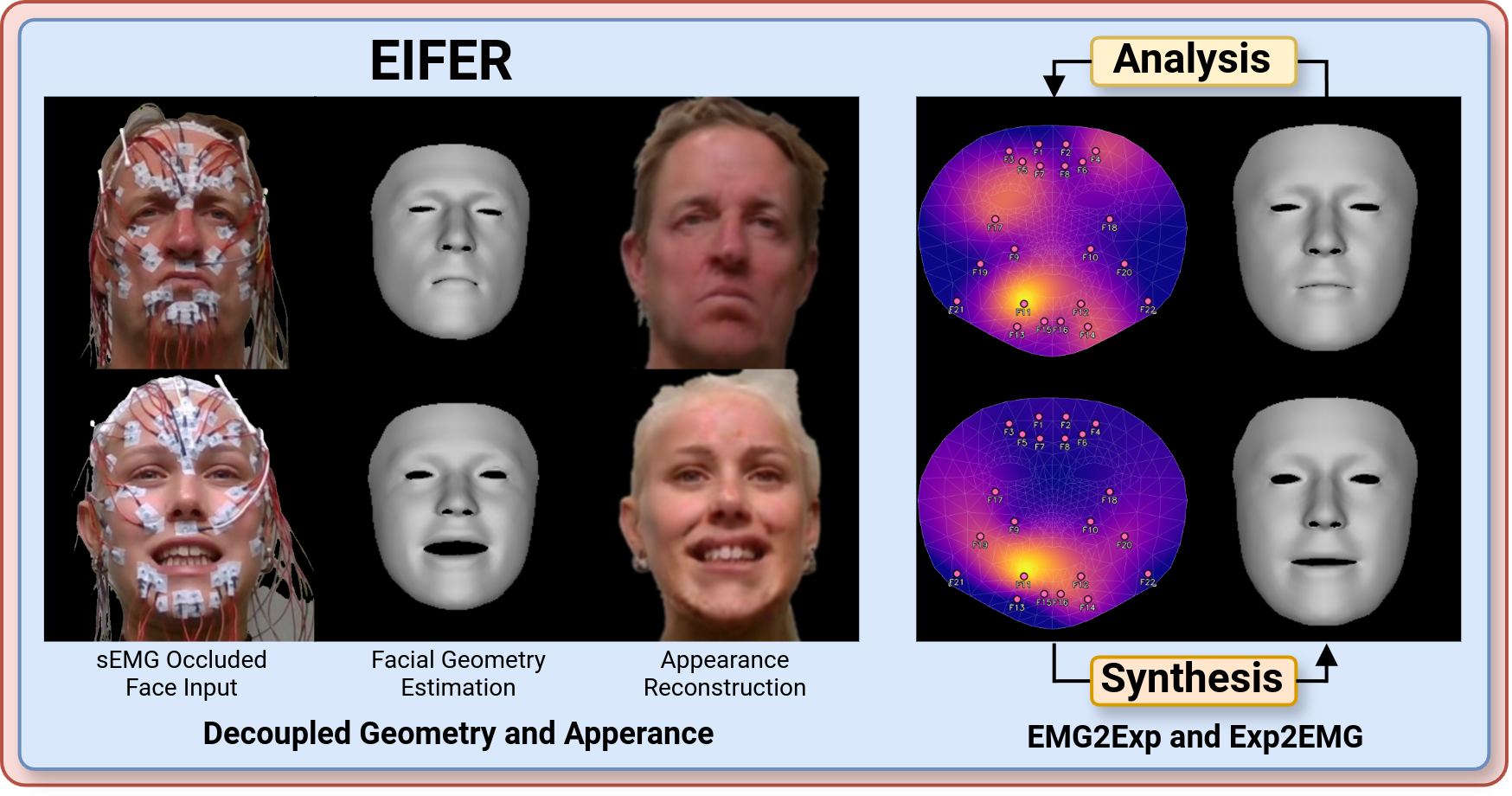

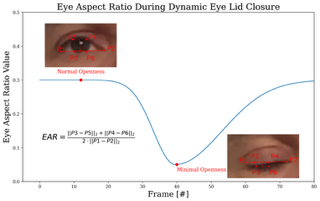

Reducing the Gap Between Mimics and Muscles by Enabling Facial Feature Analysis during sEMG Recordings [Abstract]. In Congress of the Confederation of European ORL-HNS, 2024